PDQ Deploy and PDQ Inventory - Performance Setting Recommendations

Symptoms

- There's no specific behavior, but PDQ Deploy and/or PDQ Inventory seem to have slowed down. Navigation within the software is sluggish.

- The GUI takes a while to load, sometimes crashes, and may take 3-4 attempts to successfully launch the program.

- Many deployments sit in the queue and never actually deploy until you restart the PDQ services or reboot the PDQ server.

- After a reboot or restarting services, the PDQ server may work for a few hours and then slow down or lock up again.

- You have random errors with endpoints trying to complete an Inventory scan. A scan will fail once, then work perfectly fine the next time the endpoint is scanned.

- You may see errors within the product or the Windows event logs (PDQ Event Logs) related to timeouts or integration errors. Integration errors occur when PDQ Deploy and PDQ Inventory cannot communicate with each other. This is usually caused by a credentials issue or by performance settings that interfere with communication.

- When deploying to a PDQ Inventory collection, you may receive an error: "Unable to verify collection membership condition. Integration with PDQ Inventory has been closed"

Overview

PDQ Deploy and PDQ Inventory were designed to give Administrators powerful tools to manage their environments. We err on the side of providing options rather than restricting configurations. Much like trying to install a jet engine into a lawnmower, customers can apply settings in good faith that appear to be beneficial but may cause unforeseen performance issues in other areas. We recommend the following settings to minimize or eliminate performance-related issues.

External Factors

PDQ Server Recommended (Not required) Specifications

- 4 processor cores (If using virtualization, we recommend 1 processor with 2 cores, 4 cores, 8 cores, etc.)

- 8GB of RAM

- Fastest disk storage available (NVMe or SSD). If you have tiered storage in your datacenter, we recommend the fastest tier available to ensure good read/write performance for the PDQ databases.

- Approximately 50-100 GB of storage for package repositories and PDQ databases. (This definitely varies between customers)

- Windows Server 2016 or higher (Servers allow for more concurrent connections)

Security Products

Security products can cause communication issues between the PDQ server and endpoints. We strongly recommend making sure you have the proper exemptions in place.

PDQ Deploy

All Schedules

When scheduling deployments, we recommend staggering Interval and Daily triggers when possible. For example, if you have 4 deployments that run every night, stagger their start times instead of running everything at 8:00pm:

- 8:00pm Deployment 1

- 8:30pm Deployment 2

- 9:00pm Deployment 3

- 9:30pm Deployment 4

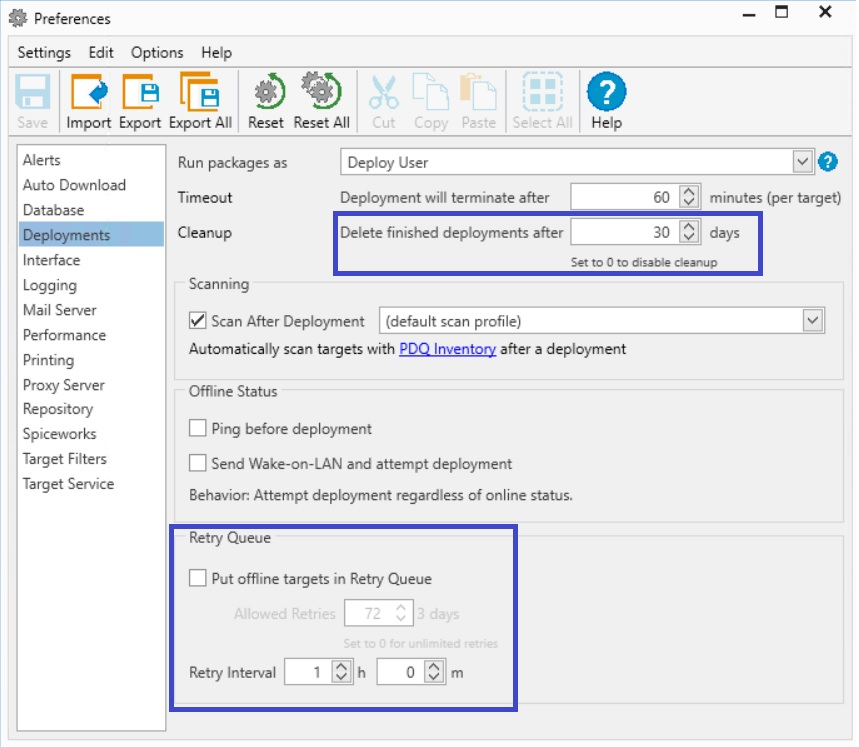
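The staggering idea can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not something PDQ Deploy runs; the function names `format_12h` and `staggered_start_times` are hypothetical, and the 30-minute gap is simply the one used in the example above.

```python
from datetime import datetime, timedelta


def format_12h(moment):
    """Format a datetime as a 12-hour clock string such as '8:30pm'."""
    hour = moment.hour % 12 or 12
    suffix = "am" if moment.hour < 12 else "pm"
    return f"{hour}:{moment.minute:02d}{suffix}"


def staggered_start_times(first_hour, first_minute, count, gap_minutes):
    """Return `count` start times, each `gap_minutes` after the previous one."""
    # The calendar date is a placeholder; only the time of day matters here.
    first = datetime(2000, 1, 1, first_hour, first_minute)
    return [
        format_12h(first + timedelta(minutes=i * gap_minutes))
        for i in range(count)
    ]


# Four nightly deployments starting at 8:00pm, 30 minutes apart.
for number, start in enumerate(staggered_start_times(20, 0, 4, 30), start=1):
    print(f"{start} Deployment {number}")
```

The gap you choose should be long enough for the previous deployment to make real progress before the next one starts; 30 minutes is just the value from the example.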

Options / Preferences / Deployments

For Deployment Cleanup, the default of 30 days should be fine for most environments. However, if you are seeing extended loading times for PDQ Deploy, consider lowering this to 7-14 days. (The lower the value, the better the performance.)

The Retry Queue is becoming antiquated if you have PDQ Inventory. We recommend NOT using the retry queue unless you only have PDQ Deploy and need that functionality. Instead, we recommend using deploy schedules targeting PDQ Inventory collections.

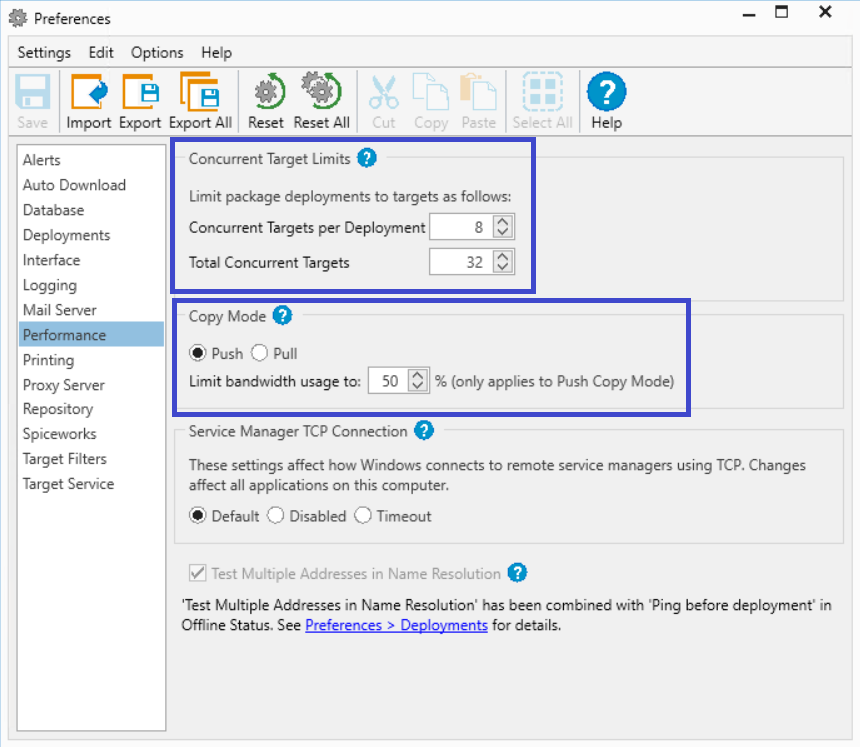

Options / Preferences / Performance

This is one of the most common things we see in support. It's tempting to increase the concurrent deployment values beyond their defaults, but doing so can quickly overwhelm the PDQ server, resulting in queued deployments and communication timeouts (integration errors) with PDQ Inventory. The goal is to reduce "burst" load and keep the PDQ server working consistently over time, rather than creating sudden, temporary resource demand that can lead to a range of issues.

Also, it's important to make sure you are using the right copy mode for your configuration. Use Push Copy Mode if you're hosting the package repository on the PDQ server and Pull Copy Mode if you are using a network share. Check out this article for more information.

PDQ Inventory

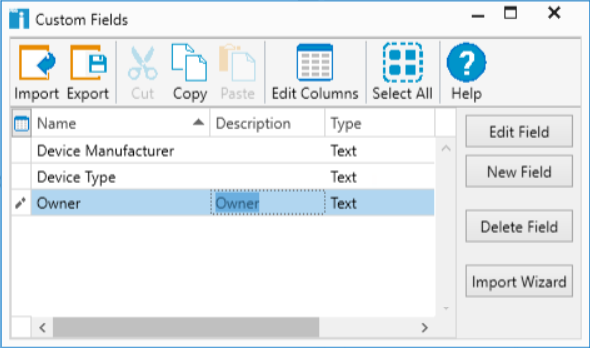

Custom Variables

When you create a custom variable, PDQ Inventory creates that field for every endpoint record in the database, even if the value is empty. Creating many custom variables can therefore quickly increase database size and potentially affect performance.

Auto Reports

Just like deployments in PDQ Deploy, it's recommended to stagger the times that your Auto Reports run. Instead of 4 reports all running at 8:00am, schedule them to run a few minutes apart:

- Report A = 7:50am

- Report B = 8:00am

- Report C = 8:10am

- Report D = 8:20am

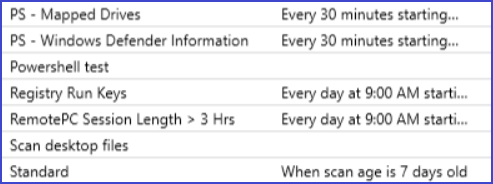

Options / Scan Profiles / Scheduling

When scheduling scan profiles, look at both how often each scan runs and when it runs. Scans that run constantly, or that all run at the same time, can cause performance issues. What to change:

1. Combine scans when possible

2. Stagger the times of scans (Scan A at 9:00am, Scan B at 9:30am)

3. Switch from an interval scan (Every 4 hours) to scan age (When scan age is 4 hours old). This helps spread out scanning times across endpoints and can improve overall performance.
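To see why the third change spreads the load, here is a deliberately simplified toy model in Python. It does not reflect how PDQ Inventory schedules scans internally; the fleet of 240 endpoints and the assumption that their previous scans finished one minute apart are hypothetical, chosen only to make the contrast visible.

```python
from collections import Counter

INTERVAL_MINUTES = 240  # "every 4 hours", as in the example above


def peak_scans_per_minute(start_minutes):
    """Return the largest number of scans that begin in the same minute."""
    return max(Counter(start_minutes).values())


# Hypothetical fleet: 240 endpoints whose previous scans finished one minute apart.
last_scan_minute = list(range(240))

# Interval trigger: every endpoint becomes due at the same clock tick.
interval_starts = [INTERVAL_MINUTES for _ in last_scan_minute]

# Scan age: each endpoint becomes due 4 hours after its own last scan.
scan_age_starts = [minute + INTERVAL_MINUTES for minute in last_scan_minute]

print(peak_scans_per_minute(interval_starts))  # 240
print(peak_scans_per_minute(scan_age_starts))  # 1
```

In practice the Concurrent Scans limit queues the burst rather than running it all at once, but the queue still has to drain, which is the kind of sudden demand the recommendations above aim to avoid.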

Options / Scan Profiles

With regard to your custom PowerShell and WMI scanners, it's important to be specific about the data you are looking for. A generic query can return a large amount of data and quickly increase the size of the database, affecting overall performance.

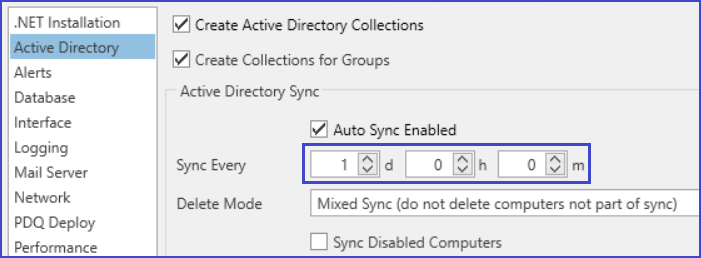

Options / Preferences / Active Directory

Active Directory scans can be resource-intensive, and we've seen customers set the sync interval as low as every 15 minutes. This can lead to performance issues, with AD-Sync practically running 24 hours a day. We recommend syncing once a day, or no more often than every 4 hours.

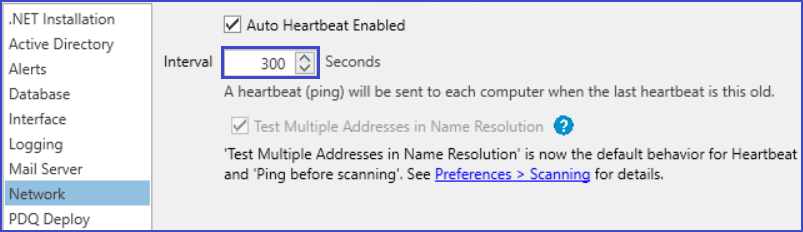

Options / Preferences / Network

The heartbeat is how often PDQ Inventory attempts to connect to an endpoint to see if it's online or offline. The default is 300 seconds, but you can improve performance by increasing this number to something close to these values:

- 1000 endpoints = ~600 Seconds

- 2000 endpoints = ~900 Seconds

- 4000 endpoints = ~1500 Seconds

This reduces simultaneous connections which can increase overall performance.
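The three values listed above happen to fall on a straight line: the 300-second default plus roughly 0.3 seconds per endpoint. The Python sketch below encodes that observation as a rough starting point. The function name `suggested_heartbeat_seconds` is hypothetical, and anything outside the 1000 to 4000 endpoint range is an extrapolation rather than a documented recommendation.

```python
DEFAULT_HEARTBEAT_SECONDS = 300  # the default mentioned above


def suggested_heartbeat_seconds(endpoint_count):
    """Linear fit through the listed values: 300 s plus 0.3 s per endpoint."""
    if endpoint_count < 0:
        raise ValueError("endpoint_count must not be negative")
    # Integer arithmetic avoids floating-point rounding surprises.
    return DEFAULT_HEARTBEAT_SECONDS + (3 * endpoint_count) // 10


for count in (1000, 2000, 4000):
    print(f"{count} endpoints = ~{suggested_heartbeat_seconds(count)} seconds")
```

Treat the result as an approximate value to start from, then adjust based on how the PDQ server behaves in your environment.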

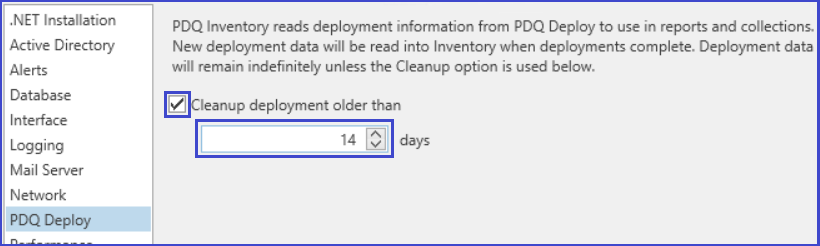

Options / Preferences / PDQ Deploy

Another opportunity to reduce PDQ Inventory database size is to enable the option to delete deployment records older than 14 days. Unless you have additional retention requirements, this value gives you 2 weeks of deployment history to reference:

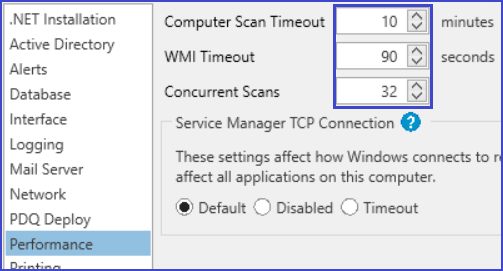

Options / Preferences / Performance

This is another common area where users increase their Concurrent Scans and overwhelm the server with too many endpoints trying to upload inventory scan information at once. Longer timeouts can also lead to performance issues. Unless there's a special need, we recommend leaving these values at their defaults.

Optimizing Databases

Once you've made all your changes, close PDQ Deploy and PDQ Inventory, open an elevated (Administrator) command prompt (or PowerShell window), and run the following commands:

pdqdeploy optimizedatabase

pdqinventory optimizedatabase

This will help clean up data that is no longer needed after your new setting changes and reduce the size of your running databases, translating into improved performance on your PDQ server.